2 Outcome Rewards

2.1 Chapter Map

- Explain how strong outcome verifiers are built.

- Show why answer extraction, canonicalization, and hidden brittleness matter.

2.2 A Rollout

A rollout is a sample from the current policy on a prompt: the model receives an input, generates a completion or trajectory, and that sampled output is what the verifier scores.

Prompt

Solve the equation: \(x^2-5x+6=0\).

Completion

<think>

We can factor the quadratic: x^2 - 5x + 6 = (x-2)(x-3).

Set each factor to zero:

x - 2 = 0 -> x = 2

x - 3 = 0 -> x = 3

The final answer is the ordered tuple (2,3).

</think>

<answer>

(2,3)

</answer>Outcome reward check (for RLVR).

The verifier reads the checked artifact from <answer>...</answer>, normalizes to standard form (canonicalizes) the task’s answer representation, and checks it against the ground-truth set.1

\[ r(x,y)= \begin{cases} 1 & \text{if } \operatorname{canon}\!\bigl(\operatorname{extract}_{\mathrm{ans}}(y)\bigr)=\{2,3\},\\ 0 & \text{otherwise.} \end{cases} \tag{2.1}\]

If the model fails the output contract (for example, omits <answer>...</answer>, changes surface form in a way the canonicalizer does not handle, or adds extraneous text that breaks parsing), the verifier can assign an incorrect reward even when the underlying solution is algebraically correct.

2.3 How outcome verifiers are implemented

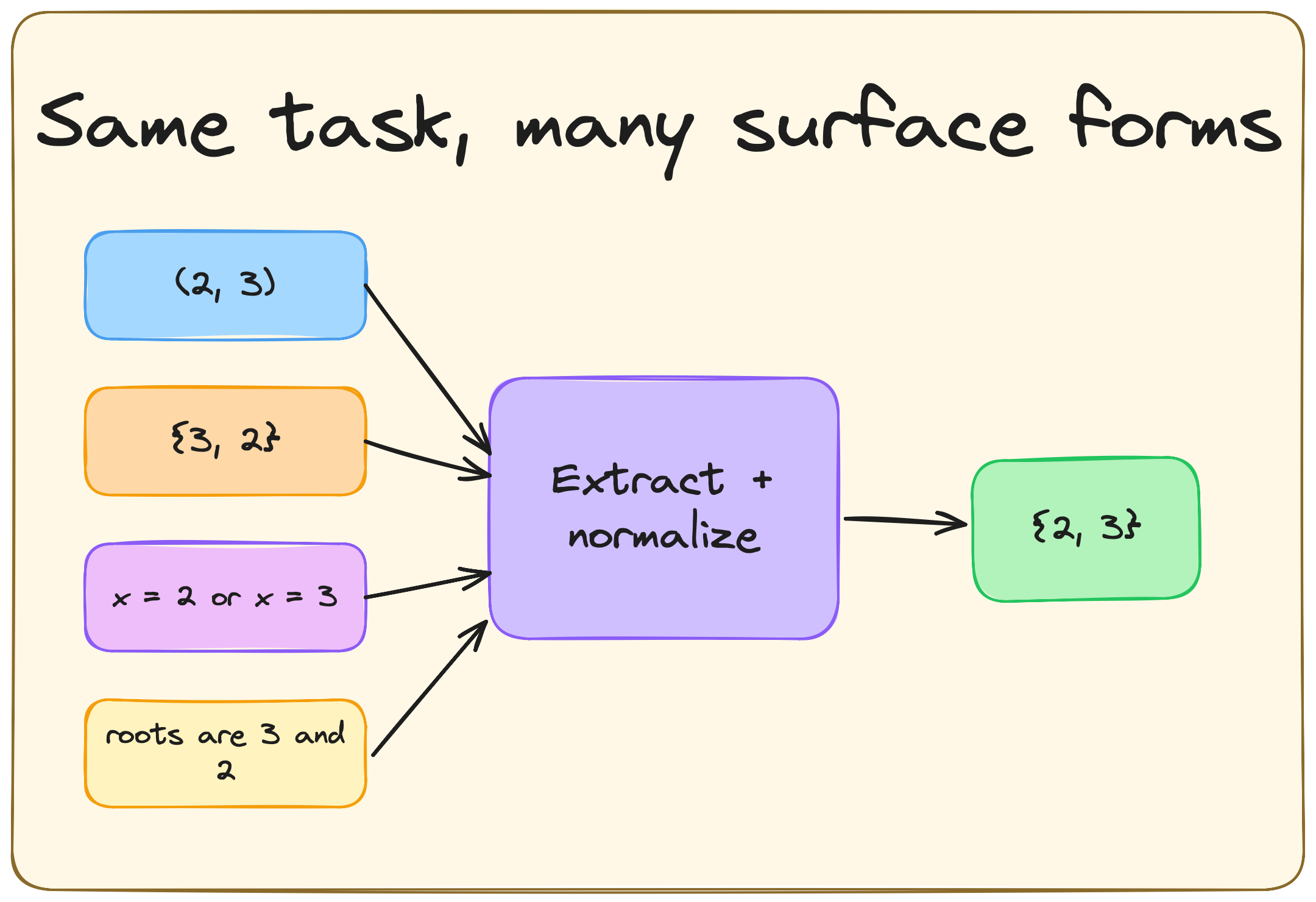

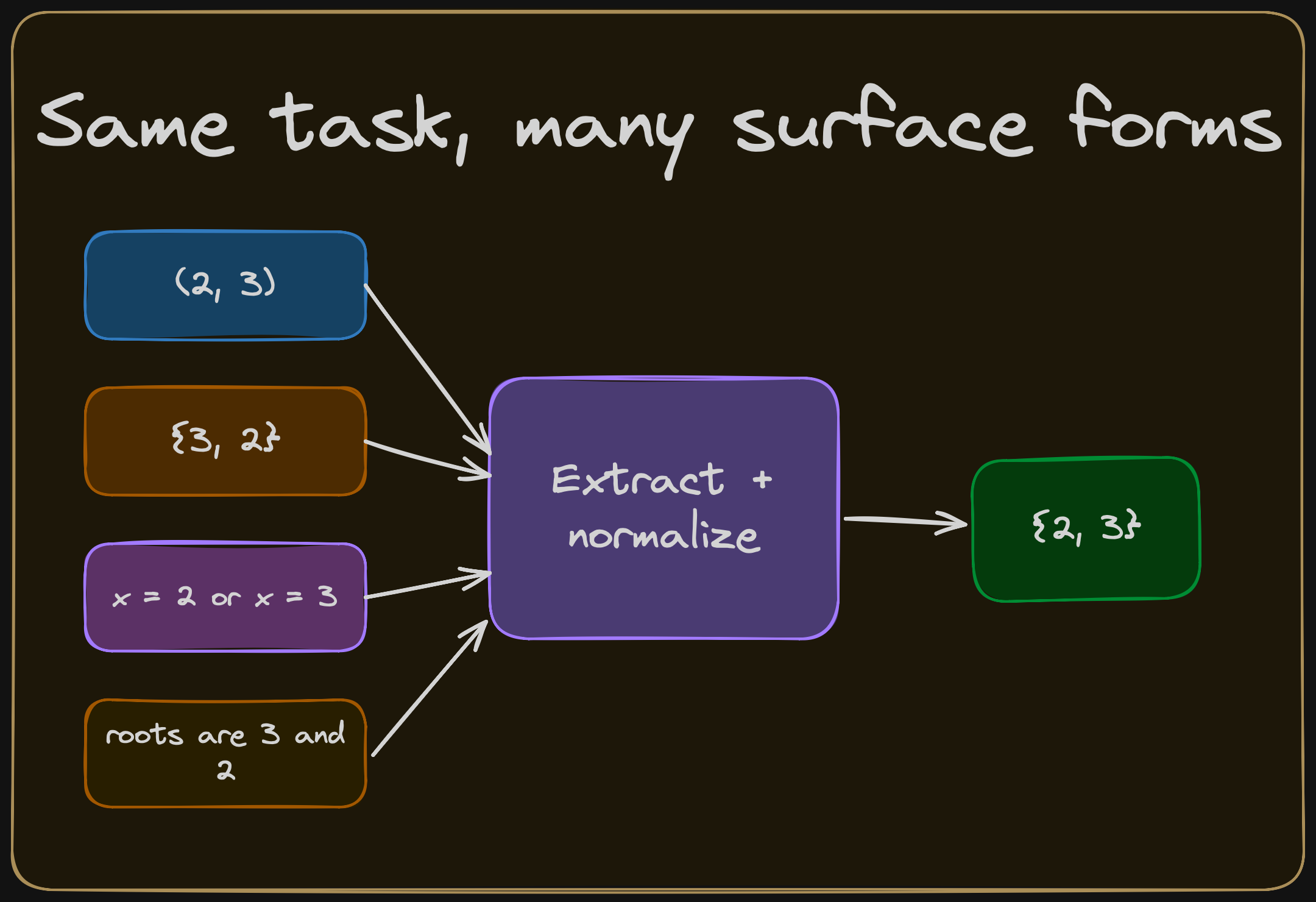

A useful abstraction of outcome-verification pipelines is in three steps:

Extract. Parse the model’s raw text to isolate the checked artifact. This depends on the output contract: the

<answer>tags in our scaffold,\boxed{}in many math benchmarks, the final code block in a generation task, or the proof term in a formal system.Canonicalize. Map the extracted artifact to a representation that is stable under harmless surface variation. In math this can mean parsing

(2,3),{3,2}, andx=2, x=3into the same set object.Reward. Assign a reward value. The simplest version is binary: 1 if correct, 0 otherwise. Partial credit for passing some but not all tests, or a continuous score from a symbolic similarity metric are possible too.

Current RLVR libraries do not have an agreed upon extract -> canonicalize -> reward interface. In practice, one usually writes or selects a task-specific reward function: in Transformer Reinforcement Learning (TRL), a reward_func; in veRL (Volcano Engine Reinforcement Learning for LLMs), a scoring function or reward manager.(Werra et al. 2020; Sheng et al. 2024) For math-style tasks, those reward functions often delegate most of the work to answer-verification libraries such as Math-Verify, whose documented grading architecture is explicit: answer extraction, conversion to a common representation, and gold comparison.(Kydlicek 2025)

A useful abstraction of what these implementations do is:

\[ \begin{aligned} a(y) &= \operatorname{extract}(y),\\ \tilde{a}(y) &= \operatorname{canon}\!\bigl(a(y)\bigr),\\ r(x,y) &= \operatorname{reward}\!\bigl(\tilde{a}(y), g(x)\bigr), \end{aligned} \tag{2.2}\]

2.4 A minimal outcome verifier

A toy math verifier for the quadratic example looks like this:

import re

ANSWER_RE = re.compile(r"<answer>\s*(.*?)\s*</answer>", re.DOTALL)

def extract_answer(completion: str) -> str | None:

match = ANSWER_RE.search(completion)

return None if match is None else match.group(1).strip()

def canonicalize_answer(answer: str) -> tuple[str, ...]:

text = answer.strip()

for ch in "{}()":

text = text.replace(ch, "")

pieces = []

for raw in text.split(","):

piece = raw.strip().replace("x =", "").replace("x=", "")

if piece:

pieces.append(piece)

return tuple(sorted(pieces))

def outcome_reward(completion: str, gold: tuple[str, ...] = ("2", "3")) -> float:

answer = extract_answer(completion)

if answer is None:

return 0.0

candidate = canonicalize_answer(answer)

return float(candidate == gold)

Where the engineering difficulty concentrates is strongly domain-dependent. In math-style RLVR, verifier design often hinges on answer-format contracts and canonicalization for deterministic parsing; in code, correctness depends heavily on the quality and coverage of the test suite; in formal proof, the core acceptance check is delegated to the proof assistant.2

2.5 Outcome check, full-trajectory update

Although verifiers only consider the outcome, the optimizer updates the entire trajectory. In REINFORCE-style algorithms (including GRPO), the scalar reward (or an advantage dervied from it) from the outcome check is used to upweight or downweight the log-probability of every token in the completion. If the answer is correct, the whole chain of reasoning that produced it becomes more likely. The converse is also true.

To quote Andrej Karpathy on his October 17, 2025 appearance on the Dwarkesh podcast (Patel 2025):

Every single one of those incorrect things you did, as long as you got to the correct solution, will be up-weighted as do more of this. It’s terrible. It’s noise. You’ve done all this work only to find a single, at the end, you get a single number of like, oh, you did correct. And based on that, you weigh that entire trajectory as like up-weight or down-weight. And so the way I like to put it is you’re sucking supervision through a straw because you’ve done all this work that could be a minute to roll out. And you’re like sucking the bits of supervision of the final reward signal through a straw. And you’re like putting it, you’re like basically like, yeah, you’re broadcasting that across the entire trajectory and using that to up or down with that trajectory. It’s crazy. A human would never do this. Number one, a human would never do hundreds of roll outs. Right. Number two, when a person sort of finds a solution, they will have a pretty complicated process of review of like, okay, I think these parts that I did well, these parts I did not do that well. I should probably do this or that. And they think through things. There’s nothing in current LLMs that does this. There’s no equivalent of it. But I do see papers popping out that are trying to do this because it’s obvious to everyone in the field.

This is the blunt instrument at the heart of outcome-based RLVR. The verifier has no opinion on individual tokens in the reasoning trace, it assigns one scalar per completion. The optimizer then spreads that number across all token-level decisions. This works surprisingly well in practice, because over many rollouts and many problems, tokens that consistently appear in correct trajectories get reinforced and tokens that appear in incorrect trajectories get suppressed. But it also means that outcome rewards cannot isolate a specific reasoning step as good or bad. That distinction is exactly what process rewards (Chapter 3) provide.

2.6 Domain specific considerations

The verifier structure in Equation 2.2 is the same across the main RLVR domains. Let’s discuss the domain-dependent difficulties:

| Domain | Checked object | Typical verifier | Main bottleneck |

|---|---|---|---|

| Math | Final answer or structured mathematical object | Answer extraction, canonicalization, symbolic equivalence, or reference comparison (Shao et al. 2024; DeepSeek-AI et al. 2025) | Output contracts and normalization |

| Code | Program, patch, or execution result | Sandboxed tests, hidden tests, timeouts, and optional static checks (Le et al. 2022; Shojaee et al. 2023; H. Liu et al. 2023) | Test coverage and flaky infrastructure |

| Formal proof | Proof term, tactic trace, or proof state | Proof-assistant kernel acceptance (Xin, Guo, et al. 2024; Xin, Ren, et al. 2024) | Search, decomposition, and formalization burden |

In math, (2,3), {3,2}, and x \in \{2,3\} should receive the same reward when the task asks for the solution set. In code, limited suites can certify incorrect programs and richer suites can change model rankings substantially.(J. Liu et al. 2023) In formal proof, final acceptance is strong, but the difficulty shifts toward theorem selection, search, decomposition, and interaction with the formal environment.

2.7 Brittleness

Despite their simplicity, outcome rewards still have failure modes:

- An extractor can reward obedience to formatting conventions more than correctness.

- A canonicalizer can fail to merge equivalent answers or merge distinct answers into one canonical form.

- A verifier can evaluate the wrong capability because the benchmark itself admits shortcuts.

- If the reward is non-binary, the model can optimize partial credit in ways that do not track the underlying task.

- In code, that can mean passing easy visible tests while failing edge cases.

2.8 Open questions

- When is binary scoring enough, and when does graded outcome feedback improve learning enough to justify the extra exploit surface it creates?

- How should hidden tests and evaluation setups be designed so that repeated training does not simply overfit to a static benchmark?

- Which output contracts and normalization schemes remain stable across model families, prompting styles, and generations of post-trained models?

- When should equivalence be defined syntactically for reproducibility, and when is semantic comparison worth the added complexity?

DeepSeek-R1 uses

<think>/<answer>separators and applies task-specific response-shape constraints for reward parsing, including boxed final outputs when useful for deterministic math verification.(DeepSeek-AI et al. 2025)↩︎This point is best supported domain by domain rather than as a single universal statistic. DeepSeek-R1 uses task-specific output-shape constraints for deterministic reward parsing in math-style reasoning tasks (DeepSeek-AI et al. 2025). EvalPlus shows that limited test suites can miss substantial amounts of incorrect code and even mis-rank models, making test quality and coverage central to code verification (J. Liu et al. 2023). For formal theorem proving, DeepSeek-Prover describes proof assistants such as Lean as providing high-accuracy, reliable proof verification, which shifts the engineering difficulty away from the final acceptance check itself (Xin, Guo, et al. 2024).↩︎