4 Learned, Programmatic, and Hybrid Verifiers

4.1 Chapter Map

- Distinguish the programmatic verifier core of RLVR from learned verifiers.

- Explain why production systems use hybrid stacks and which failure modes those stacks introduce.

4.2 Programmatic versus Learned Verifiers

Chapters 2 and 3 classified verifiers by where they apply: to the final artifact or to intermediate steps in the rollout. This chapter changes axes and asks how the verifier itself is implemented. On this axis there are two types:

Programmatic verifiers are deterministic, auditable, and brittle. They are the native RLVR object: regex-based answer extraction, symbolic equivalence checking (as in Math-Verify), unit-test execution in a sandbox, static analysis and linting, proof-kernel acceptance, and format-validation rules.(Kydlicek 2025; Le et al. 2022)

Learned verifiers are flexible, soft-scored, and opaque. They are not verifiable rewards in the narrow sense. Instead, they are learned surrogate signals: a model is trained or prompted to judge another model’s output when no direct checker can carry the whole burden. This covers ambiguity, open-endedness and edge cases, but inherits the biases and blind spots of the judge model.

4.3 Programmatic verifiers

| Domain | Programmatic checks | Checkable core |
|---|---|---|
| Math | Answer extraction, canonicalization, symbolic equivalence | Closed-form answers with known ground truth |
| Code | Sandbox execution, test suites, linters, static analysis | Functional behavior covered by tests |
| Proof | Kernel acceptance (Lean, Coq, Isabelle) | Formal validity of each tactic or proof term |
| Format | Regex, XML schema, JSON schema, tag-structure validation | Output-contract compliance |

One property shared by every row of this table is that programmatic verifiers never hallucinate. Their failure modes are enumerable: a symbolic equivalence checker either recognizes two expressions as equal or it does not, and a unit test either passes or fails. The price of this determinism is limited coverage: these checks are susceptible to missing edge cases, security vulnerabilities, and correctness properties that no test covers.(Liu et al. 2023)
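
As a minimal sketch of the math row, the following checker extracts an answer, canonicalizes it, and compares it with the ground truth. The `\boxed{...}` output contract and the names `extract_boxed`, `canonicalize`, and `check_numeric` are assumptions made for illustration; they are not taken from Math-Verify or any other library. Only rational numbers are handled here, whereas a real symbolic checker covers far more.

```python
from __future__ import annotations

import re
from fractions import Fraction

# Assumed output contract: the final answer appears inside \boxed{...}.
BOXED = re.compile(r"\\boxed\{([^{}]*)\}")


def extract_boxed(completion: str) -> str | None:
    """Return the contents of the last boxed span, or None if there is none."""
    matches = BOXED.findall(completion)
    return matches[-1].strip() if matches else None


def canonicalize(answer: str) -> Fraction | None:
    """Map '0.5', '1/2' and '2 / 4' to the same exact rational; None if unparseable."""
    try:
        return Fraction(answer.replace(" ", ""))
    except (ValueError, ZeroDivisionError):
        return None


def check_numeric(completion: str, gold: str) -> bool | None:
    """True or False when a comparison was possible, None when the checker abstains."""
    raw = extract_boxed(completion)
    if raw is None:
        return None
    candidate, target = canonicalize(raw), canonicalize(gold)
    if candidate is None or target is None:
        return None
    return candidate == target
```

The three-valued return makes the brittleness explicit: a completion ending in `\boxed{x+1}` is neither accepted nor rejected, because this checker has no way to parse it.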

4.4 Learned verifiers

4.4.1 LLM-as-a-Judge

The simplest form of learned verification is prompting a strong LLM to evaluate a weaker model’s output. Zheng et al. studied this setup systematically and called the paradigm LLM-as-a-Judge.(Zheng et al. 2023) The judge LLM takes the output and produces a judgment, such as a scalar score or a classification, which is then used as a reward signal or selection criterion. The work reports that strong judges agree with human preferences roughly 80% of the time, matching the rate at which human annotators agree with each other. This makes LLM-as-a-Judge viable in rubric-constrained domains such as formatting or instruction following. A simple extension of this approach is to sample multiple judges and take a majority vote over trajectories.(Hosseini et al. 2024)

Nevertheless, agreement rates hide systematic weaknesses, of which Zheng et al. identified four:

- position bias (the judge favors a response because of where it appears in the prompt, often the first position)

- verbosity bias (longer responses are rated higher regardless of quality)

- self-enhancement bias (a model rates its own outputs higher than a different model’s outputs of equal quality)

- limited mathematical reasoning (the judge makes errors when evaluating mathematical correctness that a symbolic checker would catch trivially)
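
Position bias has a simple mitigation that Zheng et al. discuss: query the judge twice with the two responses swapped, and count a win only if the same response is preferred in both orders. A minimal sketch follows. The judge interface (a callable that returns "A", "B", or "tie" by position) and the name `swap_consistent_verdict` are assumptions made for illustration.

```python
from typing import Callable, Literal

PositionVerdict = Literal["A", "B", "tie"]
# Assumed judge interface: receives (prompt, first, second) and names the
# better response by position: "A" for first, "B" for second, or "tie".
PairwiseJudge = Callable[[str, str, str], PositionVerdict]


def swap_consistent_verdict(
    judge: PairwiseJudge, prompt: str, resp_1: str, resp_2: str
) -> Literal["resp_1", "resp_2", "tie"]:
    """Declare a winner only if the judge prefers it in both presentation orders."""
    forward = judge(prompt, resp_1, resp_2)   # resp_1 shown first
    backward = judge(prompt, resp_2, resp_1)  # resp_2 shown first
    if forward == "A" and backward == "B":
        return "resp_1"
    if forward == "B" and backward == "A":
        return "resp_2"
    # Inconsistent or tied verdicts are treated as a tie.
    return "tie"
```

A judge that always picks the first slot yields "tie" for every pair under this scheme, so its position preference can no longer turn into reward. The cost is two judge calls per comparison.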

4.4.2 Reward model ensembles

Ensembles are the simplest hybrid stacks, combining multiple judgments homogeneously without layering different verification modalities. Coste et al. studied ensembles of reward models for RLHF and found that they mitigate but do not eliminate reward hacking.(Coste et al. 2023) Ensembles that differ in pretraining seeds generalize better than those that differ only in fine-tuning seeds, because the former have more diverse internal representations, and less-overlapping blind spots.
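
How the ensemble members are combined matters as much as how they are trained. The sketch below shows three aggregation rules often used with reward ensembles: the plain mean, the worst-case member, and the mean with a variance penalty. The function name, the mode strings, and the default `penalty` are assumptions made for illustration, not an API from the cited work.

```python
from statistics import mean, pvariance


def ensemble_reward(scores: list[float], mode: str = "mean", penalty: float = 1.0) -> float:
    """Combine the scores that ensemble members assign to one output."""
    if not scores:
        raise ValueError("ensemble_reward needs at least one score")
    if mode == "mean":
        return mean(scores)
    if mode == "min":
        # Conservative: a single sceptical member caps the reward.
        return min(scores)
    if mode == "mean_minus_var":
        # Disagreement between members is treated as a sign of unreliability.
        return mean(scores) - penalty * pvariance(scores)
    raise ValueError(f"unknown mode: {mode}")
```

The conservative rules lower the reward where members disagree, which is where a policy is most likely to be exploiting one member's blind spot. They offer no protection where all members share the same blind spot.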

4.4.3 The calibration problem

Learned surrogate verifiers produce scores, but those scores are not calibrated probabilities of correctness. A judge score of 0.8 does not mean the solution has an 80% chance of being correct; it means 0.8 is the number the judge’s training objective learned to assign to solutions with that surface profile. Lambert et al. documented this systematically in RewardBench, showing that reward models exhibit large accuracy gaps across domains, and that different training methods (classifier-based, DPO-based, generative) have different calibration profiles.(Lambert et al. 2024)

For verifier-stack design, the calibration gap means that raw scores from a learned component cannot be compared directly to outputs from a programmatic component. If a symbolic checker returns “match” (effectively certainty) and a learned judge returns 0.7, the arbitration logic must account for the fact that 0.7 from the judge does not carry the same epistemic weight as a deterministic pass from the checker. Treating both as commensurable scalars and averaging them is a mistake.
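
The calibration gap can be measured before a judge enters a stack. One standard diagnostic is expected calibration error (ECE), sketched below under two assumptions: judge scores lie in [0, 1], and a held-out set with ground-truth correctness labels is available. The function name and the equal-width binning are illustrative choices.

```python
def expected_calibration_error(
    scores: list[float], labels: list[bool], n_bins: int = 10
) -> float:
    """Average |mean score - empirical accuracy| over score bins, weighted by bin size."""
    if not scores or len(scores) != len(labels):
        raise ValueError("scores and labels must be non-empty and of equal length")
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for score, label in zip(scores, labels):
        if not 0.0 <= score <= 1.0:
            raise ValueError("scores are assumed to lie in [0, 1]")
        index = min(int(score * n_bins), n_bins - 1)  # a score of 1.0 goes in the last bin
        bins[index].append((score, label))
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_score = sum(s for s, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += len(bucket) / len(scores) * abs(avg_score - accuracy)
    return ece
```

If a judge assigns 0.8 to five solutions and four are correct, the ECE on that set is zero. If only two are correct, it is 0.4, and the raw 0.8 should not be read as a probability.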

4.5 Hybrid stacks

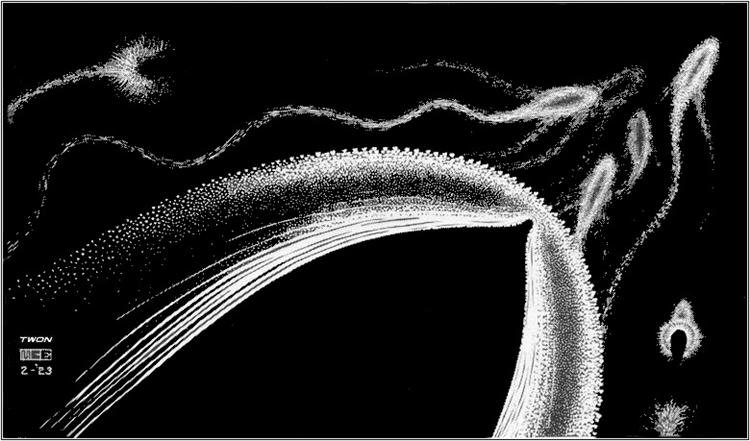

Production RLVR systems layer verifier components to make the reward signal more robust. Unit tests can check functional correctness, but they cannot judge code security or readability. By the same token, a proof kernel checks validity, but it does not judge whether the theorem was worth proving. We therefore combine multiple verifiers. A useful mental image is that each verifier produces a useful signal on a subset of inputs in some high-dimensional vector space and is silent on that subset’s complement. Stacking verifiers can shrink the region on which every component is silent, and the design problem in a hybrid stack is to determine how to compose rewards commensurately and how failure modes interact when composed.

OpenAI’s public reinforcement fine-tuning API exposes this pattern as multigrader composition, where string checks, score-model graders, and Python execution can be nested into a single grader; Anthropic similarly frames agent evaluation verifiers as ranging from exact string comparison to enlisting Claude to judge a response.(OpenAI 2026; Anthropic 2025)

4.6 Formalization

A verifier stack with \(K\) components can be written as:

\[ r_{\text{stack}}(x, y) = \operatorname{Arb}\bigl(v_1(x, y),\, v_2(x, y),\, \ldots,\, v_K(x, y)\bigr) \tag{4.1}\]

where each \(v_i\) is a verifier component that may return a score, a categorical verdict, or a null (indicating it has no opinion), and \(\operatorname{Arb}\) is the arbitration function.

Common arbitration patterns include:

- Priority cascade: check \(v_1\) first; if it returns a verdict, use it; otherwise check \(v_2\), and so on.

- Weighted aggregation: compute \(r = \sum_i w_i \, v_i(x, y)\) for learned weights \(w_i\).

- Gated routing: a classifier decides which component to invoke based on input features.

- Unanimous agreement: require all components to agree before assigning a positive reward.

The choice of arbitration pattern determines the stack’s effective false-positive and false-negative rates. Priority cascade is biased toward the first component’s failure modes. Weighted aggregation can dilute strong signals with weak ones. Gated routing’s errors depend on the routing model. Unanimous agreement can suppress correct outputs whenever a single component errs. There is no universally correct choice.
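
The four patterns can be sketched as small arbitration functions. The following is a minimal illustration, assuming each component verdict is a float in [0, 1] or `None` for "no opinion". The function names, the `default` reward, the 0.5 threshold, and the choice to skip abstentions in the weighted sum are all assumptions, not part of Equation 4.1.

```python
from __future__ import annotations

from typing import Callable, Optional, Sequence

Verdict = Optional[float]  # 1.0 = pass, 0.0 = fail, None = component abstains


def priority_cascade(verdicts: Sequence[Verdict], default: float = 0.0) -> float:
    """Use the first component that has an opinion."""
    for verdict in verdicts:
        if verdict is not None:
            return verdict
    return default


def weighted_aggregation(verdicts: Sequence[Verdict], weights: Sequence[float]) -> float:
    """Compute sum_i w_i * v_i; abstaining components contribute nothing."""
    return sum(w * v for v, w in zip(verdicts, weights) if v is not None)


def gated_routing(
    x: str,
    y: str,
    components: Sequence[Callable[[str, str], Verdict]],
    route: Callable[[str], int],
) -> Verdict:
    """Invoke only the component selected by the router for this input."""
    return components[route(x)](x, y)


def unanimous_agreement(verdicts: Sequence[Verdict], threshold: float = 0.5) -> float:
    """Reward only if at least one component voted and every vote clears the threshold."""
    voted = [v for v in verdicts if v is not None]
    return float(bool(voted) and all(v >= threshold for v in voted))
```

For the verdict vector `[None, 1.0, 0.0]` with weights `[0.5, 0.3, 0.2]`, the cascade returns 1.0, the weighted sum returns 0.3, and unanimous agreement returns 0.0. The same component outputs produce three different rewards.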

4.6.1 Hybrid verifier in code

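```python
from __future__ import annotations  # allows `float | None` annotations before Python 3.10

# extract_answer and canonicalize_answer are assumed to be defined elsewhere.


def symbolic_reward(completion: str, gold: tuple[str, ...]) -> float | None:
    """Return 1.0 or 0.0 for a checkable answer, or None if none can be extracted."""
    answer = extract_answer(completion)
    if answer is None:
        return None
    candidate = canonicalize_answer(answer)
    return float(candidate == gold)


def hybrid_reward(completion: str, gold: tuple[str, ...], judge) -> float:
    # Priority cascade: the symbolic checker decides whenever it has a verdict.
    exact = symbolic_reward(completion, gold)
    if exact is not None:
        return exact
    # Fallback: the learned judge is consulted only on the programmatic residual.
    judge_score = judge(
        completion=completion,
        rubric="Is the final answer complete and consistent with the reasoning?",
    )
    if judge_score >= 0.8:
        return 1.0
    if judge_score <= 0.2:
        return 0.0
    # Uncertain judge scores between the two thresholds also earn no reward.
    return 0.0
```

symbolic_reward returns None when no answer can be extracted, that is, when the symbolic checker cannot produce a verdict. Only then does hybrid_reward consult the judge.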

4.7 Limitations

Adding components to a verifier stack can amplify errors rather than cancel them.

Silent disagreement. Two stack components can return conflicting verdicts on the same input. If the arbitration function resolves the conflict without recording it, the disagreement never surfaces.

Correlated failures. Components often fail on the same hard residual inputs, so stack error can remain close to that of the weakest component rather than shrinking like a product of independent error rates.

Excessive complexity. Adding a component can improve average performance while making the stack more complex and harder to interpret.
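
The first two limitations can be measured on a labeled held-out set. The sketch below compares two components' accept decisions and reports how often they disagree, along with their joint false-accept rate next to the product that independence would predict. The function name and the dictionary keys are assumptions made for illustration.

```python
def stack_diagnostics(
    accept_a: list[bool], accept_b: list[bool], is_correct: list[bool]
) -> dict[str, float]:
    """Disagreement rate and false-accept rates for two verifier components."""
    n = len(is_correct)
    if n == 0 or len(accept_a) != n or len(accept_b) != n:
        raise ValueError("all three lists must be non-empty and of equal length")
    # Restrict to outputs that are actually incorrect to measure false accepts.
    negatives = [(a, b) for a, b, ok in zip(accept_a, accept_b, is_correct) if not ok]
    if not negatives:
        raise ValueError("need at least one incorrect output")
    fa_a = sum(a for a, _ in negatives) / len(negatives)
    fa_b = sum(b for _, b in negatives) / len(negatives)
    fa_joint = sum(a and b for a, b in negatives) / len(negatives)
    disagreement = sum(a != b for a, b in zip(accept_a, accept_b)) / n
    return {
        "disagreement_rate": disagreement,
        "false_accept_a": fa_a,
        "false_accept_b": fa_b,
        "false_accept_joint": fa_joint,  # both components accept a wrong output
        "independent_product": fa_a * fa_b,  # what independence would predict
    }
```

When `false_accept_joint` sits near the individual rates rather than near `independent_product`, the components are failing on the same inputs, and requiring both to agree buys little additional protection.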

4.8 Open questions

- When should learned judges be first-class stack components that score every output, rather than fallbacks invoked only on the programmatic residual?

- What is the ceiling on stacking beyond which debugging costs exceed the gains?

- Can the marginal value of each stack component be quantified before deployment, or must it be measured empirically on the target task distribution?

4.9 What comes next

The verifier stack defines what gets checked and how, not how those checks become training signal. A stack returning binary outcomes, one returning graded scores, and one returning step-level annotations will produce very different learning dynamics even if they agree on output correctness. Transforming verifier outputs into something an optimizer can use is the subject of Chapter 5.